Google AI Mode SEO: A Practical Framework for 2026

Google AI Mode SEO works when pages solve a full user task with clear structure, explicit evidence, and citations that are easy for retrieval systems to trust. Teams that combine technical cleanliness, entity consistency, and section-level answer formatting are better positioned to capture both AI citations and high-intent clicks.


Google AI Mode SEO should start with the exact query surface users now see: conversational prompts, multi-turn refinement, and intent shifts inside one session. If your page only targets a narrow phrase and does not resolve adjacent questions, it can still rank traditionally but fail to earn inclusion in synthesized answers. This is why many teams are now pairing intent mapping with section-level answer design and evidence-first writing.

The core principle has not changed: Google still points publishers to Search Essentials and helpful, people-first content. What has changed is the interaction model. Instead of one query and one click, users ask layered questions, compare options, and test assumptions inside the interface. That increases the value of pages that combine definition, method, examples, constraints, and tradeoffs in one coherent document.

What is Google AI Mode and why does it change SEO workflows?

Google AI Mode is best treated as a conversational retrieval layer, not a replacement for all classic search behavior. It is designed for exploration, clarification, and follow-up questions. For SEO teams, the operational impact is that a single page now competes on answer utility across multiple adjacent intents, not only on exact term matching.

Google Search Central guidance for AI features emphasizes that the same foundational best practices still apply, including technical accessibility, original value, and clear page purpose. If your baseline is weak, AI-oriented formatting will not compensate for it. If your baseline is strong, AI-oriented formatting can make your material easier for systems to extract and cite.

| Search Surface | User Behavior | SEO Priority |
|---|---|---|
| Classic SERP | Single-query comparison | Relevance, snippet clarity, CTR |
| AI Overviews | Fast summary validation | Summary-friendly structure and evidence |
| AI Mode | Multi-turn decision journey | Task-complete content and citation-ready sections |

The practical move is not to create a separate AI Mode content silo. Instead, upgrade high-value pages so each major section can answer a plausible follow-up question. The page should work as a linear article and as a modular answer source.

How do you optimize content architecture for Google AI Mode SEO?

Architecture is where most teams underperform. They publish many pages that each answer a thin slice, then wonder why no page is cited consistently. AI Mode tends to reward pages that connect definitions, procedure, thresholds, exceptions, and implementation details in one place.

Build answer blocks, not just paragraphs

For each major section, use a predictable pattern: direct answer, why it matters, concrete method, and a boundary condition. This makes the section independently useful when surfaced out of context. It also reduces ambiguity for both users and ranking systems.
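The four-part pattern above can be sketched as a small data structure that an editor or a pre-publish script checks for completeness. This is a minimal sketch: the `AnswerBlock` class, its field names, and the sample text are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass


@dataclass
class AnswerBlock:
    """One section written so it still makes sense when quoted out of context."""
    heading: str          # the follow-up question the section answers
    direct_answer: str    # one or two sentences that answer it outright
    why_it_matters: str   # the consequence of getting this right or wrong
    method: str           # the concrete steps or procedure
    boundary: str         # when the advice does not apply

    def missing_parts(self) -> list[str]:
        """Return the names of any parts that were left empty."""
        parts = {
            "direct_answer": self.direct_answer,
            "why_it_matters": self.why_it_matters,
            "method": self.method,
            "boundary": self.boundary,
        }
        return [name for name, text in parts.items() if not text.strip()]


# Hypothetical draft section with the boundary condition not yet written.
draft = AnswerBlock(
    heading="How do you control duplication?",
    direct_answer="Consolidate pages that target the same intent.",
    why_it_matters="Near-duplicates split authority across URLs.",
    method="Pick one canonical URL per intent and redirect the rest.",
    boundary="",
)
print(draft.missing_parts())  # ['boundary']
```

A check like this does not judge quality. It only catches sections that skip one of the four parts.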

Use hierarchy to mirror user follow-up behavior

Your H2s should match the questions users ask after the first answer, and your H3s should break implementation into steps. This aligns with practices in our writing for AI answers framework and the internal linking model used to reinforce topical authority.

Control duplication before it becomes a quality problem

Conversational interfaces can expose near-duplicate pages quickly. If your site has three pages targeting the same intent with minor wording changes, consolidate them or point them all to a single canonical URL. Use the governance controls in our duplicate-content prevention guide and canonical implementation checklist.
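One rough way to find consolidation candidates is to compare page bodies by word-shingle overlap. The sketch below uses Jaccard similarity over three-word shingles. It is an illustrative technique, not a description of how any search engine detects duplicates, and the URLs, sample text, and 0.5 threshold are assumptions to tune on your own content.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word sequences of the given size."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(max(len(words) - size + 1, 1))}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return URL pairs whose body text overlaps at or above the threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    pairs = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs


# Hypothetical page bodies, shortened for the example.
pages = {
    "/a": "how to set a canonical url for duplicate pages on your site",
    "/b": "how to set a canonical url for duplicate pages on your blog",
    "/c": "measuring assisted conversions with monthly pipeline reports",
}
for url_a, url_b, score in near_duplicates(pages):
    print(url_a, url_b, score)
```

Pairs flagged this way still need an editorial decision. High overlap suggests consolidation but does not prove the pages serve the same intent.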

Strong AI Mode performance is usually an outcome of clear information design, not a trick in metadata.

Which evidence and citation signals matter most for AI Mode citations?

Evidence density matters because AI answers synthesize claims across sources. A page that states recommendations without verifiable support may still look polished but is less reliable as a citation candidate. Your goal is to make every important assertion traceable to either primary documentation or transparent methodology.

Use source-backed claim blocks

Pair key claims with links to authoritative references like Google Search Central documentation, official product docs, standards bodies, or original datasets. This approach is also aligned with our editorial pattern in adding citations to content.

Strengthen entity consistency across pages

Citation systems evaluate clarity of entities as much as paragraph quality. Keep organization, author, and topical identity consistent across pages using standardized terminology, linked references, and structured data where relevant. See organization schema guidance and entity consistency workflows.
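As an illustration of keeping organization identity consistent, the sketch below generates one schema.org `Organization` JSON-LD object from a single source of truth, so every template emits the same name, URL, and profile links. The organization name and URLs are placeholders, and the function name is hypothetical.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # official profiles that identify the same entity
    }
    return json.dumps(data, indent=2)


# Placeholder values; replace with your organization's real details.
snippet = organization_jsonld(
    name="Example Co",
    url="https://example.com/",
    same_as=["https://www.linkedin.com/company/example-co"],
)
print(snippet)
```

The output would typically be placed inside a `<script type="application/ld+json">` element in the page template.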

Keep publication quality stable

If one article is deeply sourced and the next is shallow, trust signals become inconsistent. Use repeatable QA checkpoints for sourcing, structure, factual precision, and linking. This is why teams often combine a source pack with an editorial QA scorecard before publication.

How do you measure Google AI Mode SEO performance without bad assumptions?

Measurement is difficult because AI interfaces can influence demand and click paths in indirect ways. If you only watch one metric, you will overcorrect. A better model combines visibility proxies, engagement quality, and business outcomes while documenting release changes.

Build a three-layer KPI model

| Layer | Metrics | Cadence |
|---|---|---|
| Visibility | Queries, impressions, landing-page click share | Weekly |
| Quality | Engaged sessions, return rate, task-complete events | Weekly |
| Outcome | Leads, pipeline contribution, assisted conversions | Monthly |

This structure mirrors the approach in our SEO measurement playbook and avoids the common mistake of declaring success from raw visibility shifts without checking business quality.
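The three-layer table can also live as a small config that a reporting script reads. This is a sketch under stated assumptions: the metric keys are illustrative names derived from the table, and the sample report values are made up.

```python
# Hypothetical encoding of the three-layer KPI table; metric names are illustrative.
KPI_MODEL = {
    "visibility": {
        "metrics": ["queries", "impressions", "landing_page_click_share"],
        "cadence": "weekly",
    },
    "quality": {
        "metrics": ["engaged_sessions", "return_rate", "task_complete_events"],
        "cadence": "weekly",
    },
    "outcome": {
        "metrics": ["leads", "pipeline_contribution", "assisted_conversions"],
        "cadence": "monthly",
    },
}


def layers_due(cadence: str) -> list[str]:
    """Return the KPI layers reviewed at the given cadence."""
    return [name for name, spec in KPI_MODEL.items() if spec["cadence"] == cadence]


def missing_layers(report: dict[str, float]) -> list[str]:
    """List layers with no metric present in a report, so no layer is skipped."""
    return [
        name for name, spec in KPI_MODEL.items()
        if not any(metric in report for metric in spec["metrics"])
    ]


# Made-up report that covers visibility and quality but omits outcome metrics.
report = {"impressions": 12000, "engaged_sessions": 900}
print(layers_due("weekly"))    # ['visibility', 'quality']
print(missing_layers(report))  # ['outcome']
```

Flagging a report with no outcome metrics is one way to guard against declaring success from visibility alone.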

Use annotations and holdouts

When you ship major page updates, record date, page set, changed sections, and expected outcome. If possible, keep a comparable control set untouched for two to four weeks. This produces cleaner interpretation than reacting to short-term volatility.
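A minimal sketch of the annotation record and the holdout comparison follows. The `ReleaseAnnotation` fields mirror the four items listed above, and the lift calculation is a simple difference in percentage change between the updated set and the control set. All names, dates, and click counts are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ReleaseAnnotation:
    """What shipped, where, and what it was expected to change."""
    shipped_on: date
    page_set: list[str]
    changed_sections: list[str]
    expected_outcome: str


def pct_change(before: float, after: float) -> float:
    """Relative change from the baseline period to the comparison period."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def lift_vs_holdout(test_before: float, test_after: float,
                    control_before: float, control_after: float) -> float:
    """Change in the updated pages minus change in the untouched control pages."""
    return pct_change(test_before, test_after) - pct_change(control_before, control_after)


# Hypothetical release note and click totals.
note = ReleaseAnnotation(
    shipped_on=date(2026, 1, 12),
    page_set=["/guides/canonical-tags"],
    changed_sections=["H2 rewrite", "answer blocks"],
    expected_outcome="higher landing-page click share",
)
lift = lift_vs_holdout(1000, 1200, 800, 840)
print(round(lift, 3))  # 0.15
```

In this example the updated pages grew 20% while the control set grew 5%, so about 15 percentage points of the change is attributable to something other than the shared background trend. The result is only as trustworthy as the comparability of the two page sets.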

Segment by intent cluster, not only URL

Conversational search can redirect user journeys across multiple pages. Review performance by topic cluster in addition to URL-level reports so you can spot whether authority is moving to better assets or dispersing across duplicates.
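Cluster-level review can be as simple as rolling URL rows up through a URL-to-cluster mapping. The sketch below assumes you already export (URL, clicks) rows from your analytics tool. The mapping, URLs, and numbers are hypothetical.

```python
from collections import defaultdict


def clicks_by_cluster(rows: list[tuple[str, int]],
                      url_to_cluster: dict[str, str]) -> dict[str, int]:
    """Sum clicks per topic cluster; URLs without a mapping go to 'unmapped'."""
    totals: dict[str, int] = defaultdict(int)
    for url, clicks in rows:
        totals[url_to_cluster.get(url, "unmapped")] += clicks
    return dict(totals)


# Hypothetical mapping maintained by the content team.
url_to_cluster = {
    "/guides/canonical-tags": "duplication",
    "/blog/duplicate-content": "duplication",
    "/guides/kpi-model": "measurement",
}
# Hypothetical URL-level export.
rows = [
    ("/guides/canonical-tags", 120),
    ("/blog/duplicate-content", 30),
    ("/guides/kpi-model", 75),
    ("/blog/new-post", 10),
]
print(clicks_by_cluster(rows, url_to_cluster))
# {'duplication': 150, 'measurement': 75, 'unmapped': 10}
```

Comparing the per-URL split inside a cluster across two periods shows whether traffic is concentrating on the stronger page or spreading across duplicates.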

What does a 90-day Google AI Mode SEO implementation plan look like?

A focused 90-day sprint tends to outperform a broad rewrite program. Choose 10 to 20 pages with strong commercial intent, then apply the same architecture and evidence standards to each. Keep scope narrow enough that QA is real, not aspirational.

| Phase | Weeks | Deliverable |
|---|---|---|
| Baseline and selection | 1-2 | Priority page set, KPI baseline, source standards |
| Structural upgrades | 3-6 | H2/H3 rewrite, answer blocks, evidence integration |
| Technical and internal links | 7-8 | Canonical QA, schema checks, cluster links |
| Measurement and iteration | 9-12 | Review trend shifts, update weak sections, expand set |

The strongest compounding effect usually comes from internal link clarity and section rewrites, not from adding net-new posts. Teams that improve existing pages first often see more reliable gains sooner, in both traditional search performance and AI citation frequency.

If your stack is still inconsistent on crawl/index controls, start with technical SEO hygiene and indexing controls before scaling AI Mode experiments.

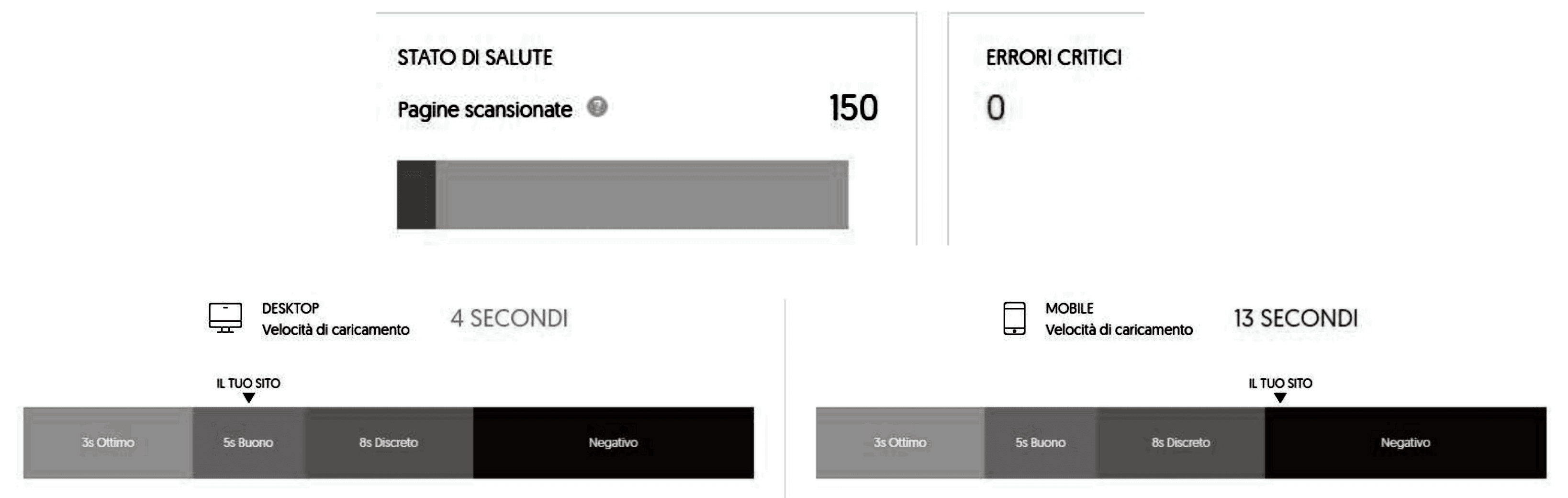

What are the highest-impact technical checks for Google AI Mode SEO?

Content quality will not carry pages that are hard to crawl, weakly canonicalized, or inconsistent across devices. Before scaling any AI Mode program, run a compact technical checklist on every candidate URL. Keep this checklist operational, not theoretical: each item should have an owner, validation method, and remediation SLA.

Crawl and indexing verification

Confirm that target pages are indexable and internally discoverable, then validate with Search Console URL-level checks. If a strong page is intermittently crawled or excluded, AI Mode visibility work becomes unstable because the source pool itself is unstable.

For baseline diagnostics, review Google's "How Search works" documentation and inspect URL states with the URL Inspection tool in Search Console.
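Before opening Search Console, a quick local pass over the page HTML can catch the most obvious blockers. The sketch below uses only the Python standard library to read the robots meta tag and the canonical link from an HTML string. It does not check `X-Robots-Tag` headers, robots.txt, or Google's actual index state, and the sample HTML and URLs are hypothetical.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collect the robots meta directive and canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.robots = (attr.get("content") or "").lower()
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")


def check_page(html: str, expected_url: str) -> list[str]:
    """Return a list of indexability problems found in the HTML."""
    signals = HeadSignals()
    signals.feed(html)
    problems = []
    if signals.robots and "noindex" in signals.robots:
        problems.append("meta robots contains noindex")
    if signals.canonical is None:
        problems.append("no canonical link found")
    elif signals.canonical != expected_url:
        problems.append(f"canonical points to {signals.canonical}")
    return problems


# Hypothetical page with a noindex directive and a mismatched canonical.
sample_html = (
    '<html><head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/guide"></head>'
    "<body></body></html>"
)
for problem in check_page(sample_html, "https://example.com/guide/"):
    print(problem)
```

An empty result only means the HTML itself sends no conflicting signal. Confirm the live state in Search Console.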

Canonical and duplication controls

Consolidate near-identical URLs that split authority. In practice, this means one canonical source for each intent cluster, with alternates either redirected to it or kept out of the index. Teams often lose weeks on AI-facing experiments when the core issue is unresolved duplication.

Schema and page clarity checks

Structured data does not guarantee visibility, but clean schema reduces ambiguity and improves machine readability. Validate syntax and required fields, then verify that on-page claims match structured values. Each mismatch is small, but together they erode trust.
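The syntax, field, and mismatch checks can be sketched in a few lines. The `REQUIRED_FIELDS` list below is an assumption that stands in for your own editorial policy, not a statement of what any search engine requires, and the sample JSON-LD and title are hypothetical.

```python
import json

# Assumption: fields this team chooses to require per schema type.
REQUIRED_FIELDS = {"Article": ["headline", "author", "datePublished"]}


def schema_problems(jsonld_text: str, visible_title: str) -> list[str]:
    """Check JSON-LD syntax, team-required fields, and headline/title agreement."""
    try:
        data = json.loads(jsonld_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["expected a single JSON-LD object"]

    problems = []
    for field in REQUIRED_FIELDS.get(data.get("@type"), []):
        if not data.get(field):
            problems.append(f"missing {field}")
    headline = data.get("headline")
    if headline and headline.strip() != visible_title.strip():
        problems.append("headline does not match the visible title")
    return problems


# Hypothetical markup with no publication date and a stale headline.
sample_jsonld = json.dumps({
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "AI Mode SEO Guide",
    "author": {"@type": "Person", "name": "Jane Doe"},
})
print(schema_problems(sample_jsonld, "Google AI Mode SEO: A Practical Framework for 2026"))
# ['missing datePublished', 'headline does not match the visible title']
```

A check like this complements, rather than replaces, an official structured data testing tool.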

Image and media accessibility

Conversational search journeys frequently include visual context. Keep image filenames descriptive, captions useful, and alt text specific to what the image contributes. This supports both accessibility and extraction quality in AI-assisted answer surfaces.
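A simple audit can flag images whose alt text is missing or generic. The sketch below parses an HTML string with the standard library. The list of generic words is an assumption to adapt, and because an empty alt is legitimate for purely decorative images, flagged items need human review.

```python
from html.parser import HTMLParser

# Assumption: words that add no information when they make up the whole alt text.
GENERIC_WORDS = {"image", "photo", "picture", "graphic"}


class ImageAudit(HTMLParser):
    """Collect the src of every image with missing or generic alt text."""

    def __init__(self):
        super().__init__()
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        alt = (attr.get("alt") or "").strip()
        words = alt.lower().split()
        # Flag empty alt text, or alt text made only of generic words and numbers.
        if not alt or all(w in GENERIC_WORDS or w.isdigit() for w in words):
            self.flagged.append(attr.get("src"))


# Hypothetical markup: one generic alt, one descriptive alt.
sample_html = (
    '<img src="image-1.png" alt="image 1">'
    '<img src="kpi-layers.png" alt="Three-layer KPI model with visibility, '
    'quality, and outcome rows">'
)
audit = ImageAudit()
audit.feed(sample_html)
print(audit.flagged)  # ['image-1.png']
```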

Which mistakes cause most Google AI Mode SEO projects to stall?

Teams rarely fail because they chose the wrong prompt pattern. They fail because execution drifts between strategy, editorial, and technical owners. The following failure modes are consistent across enterprise programs and smaller content teams.

Mistake 1: Chasing novelty instead of query economics

Many teams prioritize trendy prompts over pages tied to meaningful pipeline. Start with query sets that already generate qualified sessions or high-conviction problem discovery, then improve those assets first. This keeps experimentation tied to outcomes rather than vanity metrics.

Mistake 2: Publishing too many thin pages

Thin pages increase maintenance cost and fragment authority. AI Mode optimization should usually reduce page count per topic by consolidating overlapping intent into stronger references with clearer sections and better evidence.

Mistake 3: Treating measurement as an afterthought

If instrumentation starts after publication, every conclusion is noisy. Define success windows, segment rules, and attribution logic before edits go live. Then review outcomes at fixed intervals rather than reacting daily.

Mistake 4: Ignoring editorial operations

Without workflow discipline, quality regresses quickly as output increases. Maintain source requirements, review gates, and ownership for updates. A steady publishing system beats sporadic bursts of high effort.

If your team is still standardizing process, combine this playbook with the AI-assisted workflow governance model and a content refresh attribution loop so improvements are repeatable.

Google's own guidance continues to emphasize useful, reliable, people-first content. That principle remains the safest operating policy while interfaces evolve; see Google's people-first content guidance.